
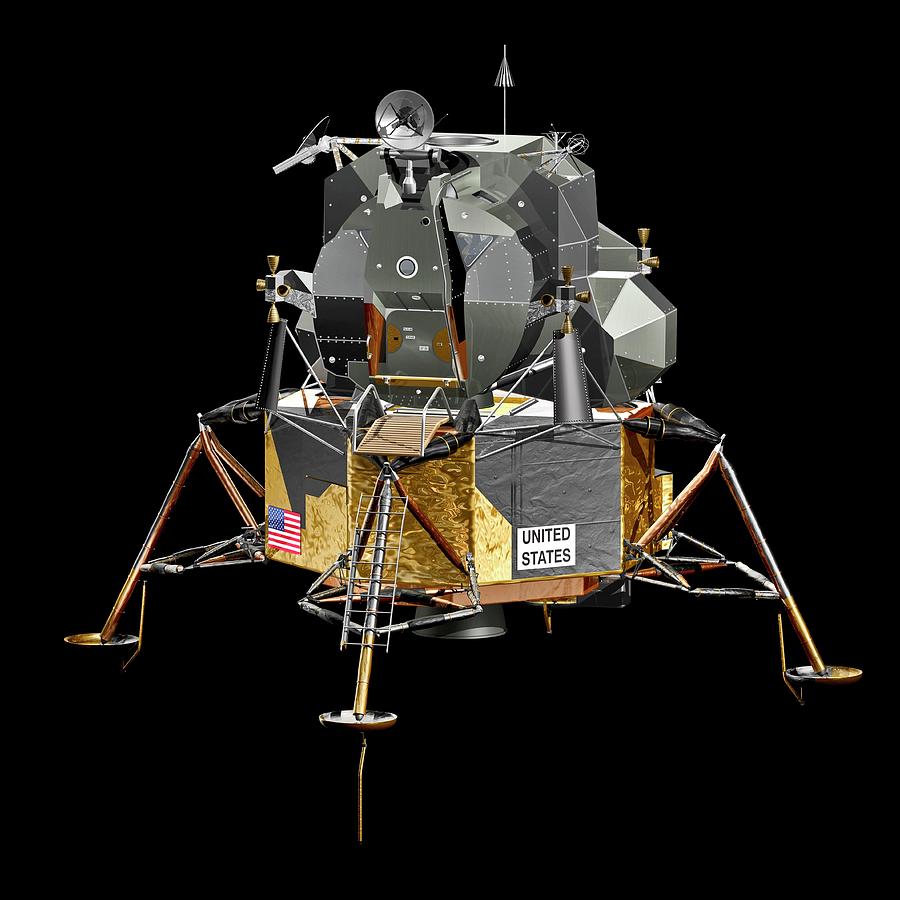

3/14/2023 Lunar lander

If you download the NetLogo application, this model is included. You can also try running it in NetLogo Web.

This model is based on the arcade game Lunar Lander. The object of the game is to land the red lunar module on the blue landing pad on the surface of the moon without crashing or breaking the module. The lunar module is fragile, so you have to be moving extremely slowly to prevent damage when you touch down. You have one thruster that exerts a force depending on the tilt of the module. You have the ability to tilt right and left.

SETUP starts the game over by creating a new surface for you to navigate and poising your module above that surface, ready for descent. Be ready: the module will start descending fairly quickly. LEFT and RIGHT tilt the module back and forth. THRUST fires your rockets according to your current tilt. PLATFORM-WIDTH controls the width of the blue landing pad created at setup; a wider landing pad makes an easier target. TERRAIN-BUMPINESS controls the variation in the elevation of the lunar surface. More bumpiness may mean you will have large obstacles to maneuver around. THRUST-AMOUNT controls the magnitude of the force of your rockets.

When TERRAIN-BUMPINESS is very high, some of the randomly generated surfaces are not navigable. Try to land the module with the fewest adjustments. Increase the THRUST-AMOUNT to make the game harder.

EXTENDING THE MODEL

Add one or more plots to the model. For example, you might plot the position, velocity, and/or acceleration of the module, in the plane or just on the Y axis.

Currently, collisions with the edges of the module are not detected, so you can graze the side of a peak with the edge of the module without crashing. It would be more realistic if these crashes were detected.

Add levels to the game by continually making the terrain bumpier, the platform smaller, or by some other method of making the game more difficult, perhaps by adding alien spaceships. Try to write a robot pilot that will automatically land the module safely.

This model uses the random-poisson reporter to create the terrain. See its entry in the NetLogo Dictionary. The frame rate setting is used to control the speed of the game.

If you mention this model or the NetLogo software in a publication, we ask that you include the citations below. Center for Connected Learning and Computer-Based Modeling, Northwestern University, Evanston, IL.

This work is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 3.0 License. To obtain a copy of this license, send a letter to Creative Commons, 559 Nathan Abbott Way, Stanford, California 94305, USA. Commercial licenses are also available.

Here is an implementation of a reinforcement learning agent that solves the OpenAI Gym’s Lunar Lander environment. This environment consists of a lander that, by learning how to control 4 different actions, has to land safely on a landing pad with both legs touching the ground. A successfully trained agent should be able to achieve an average score of 200 or more over 100 consecutive runs.

I'm using an implementation of a Deep Q-Network (DQN) to solve the Lunar Lander environment. This implementation is inspired by the DQN described in (Mnih et al., 2015), where it was used to solve classic Atari 2600 games. Below I explore the different decisions needed to construct a successful implementation, how some of the hyperparameters were selected, and the overall results obtained by the trained agent.

The Lunar Lander environment has an infinite state space across 8 continuous dimensions, which makes standard Q-learning impossible to apply unless the space is discretized, and discretization is inefficient and impractical for this problem. Instead, a DQN uses a Deep Neural Network (DNN) to approximate the Q*(s, a) function, getting around the limitation of the standard Q-learning algorithm for infinite state spaces.

I used TensorFlow for the implementation of the DNN. The network uses an Adam optimizer, and its structure consists of an input layer with a node for each of the 8 dimensions of the state space, a hidden layer with 32 neurons, and an output layer with a node for each of the 4 possible actions the lander can take. The activation function between the layers is a rectifier (ReLU), and there's no activation function for the output layer. The experience gained by the agent while acting in the environment was saved in a memory buffer, and a small batch of observations from this buffer was randomly selected and then used as the input to train the weights of the DNN, a process called "Experience Replay."

The agent is created from its hyperparameters:

    agent = Agent(training, LEARNING_RATE, DISCOUNT_FACTOR, EPSILON_DECAY)

Feel free to change these values or include your own. Whatever it takes to make things run better.
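The DQN pieces described in this post (a network with 8 inputs, 32 ReLU hidden units, and 4 linear outputs; a replay memory sampled at random; and the Q-learning target) can be sketched roughly as follows. This is a minimal illustration using only the Python standard library, not the TensorFlow code the post refers to, and it leaves out the gradient step and the Adam optimizer entirely. The names `init_layer`, `dense`, `q_values`, `ReplayBuffer`, and `td_target` are hypothetical, and the buffer capacity, batch size, and discount factor are assumed values that the post does not state.

```python
import random
from collections import deque

STATE_DIM, HIDDEN_UNITS, N_ACTIONS = 8, 32, 4  # layer sizes stated in the post


def init_layer(n_in, n_out, rng):
    """Return (weights, biases) for one dense layer with small random weights."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def dense(inputs, layer, relu):
    """Compute W x + b, followed by ReLU when relu is True."""
    weights, biases = layer
    out = [sum(w * x for w, x in zip(row, inputs)) + b for row, b in zip(weights, biases)]
    return [max(0.0, o) for o in out] if relu else out


def q_values(state, hidden_layer, output_layer):
    """8 inputs -> 32 ReLU units -> 4 linear outputs (one Q-value per action)."""
    return dense(dense(state, hidden_layer, relu=True), output_layer, relu=False)


class ReplayBuffer:
    """Fixed-size memory of (state, action, reward, next_state, done) tuples."""

    def __init__(self, capacity):
        self.memory = deque(maxlen=capacity)  # oldest experiences are dropped first

    def add(self, state, action, reward, next_state, done):
        self.memory.append((state, action, reward, next_state, done))

    def sample(self, batch_size, rng=random):
        # Uniform random minibatch drawn from everything stored so far.
        return rng.sample(list(self.memory), batch_size)


def td_target(reward, next_q, done, discount):
    """Q-learning target: r if the episode ended, else r + discount * max Q(s', a')."""
    return reward if done else reward + discount * max(next_q)


# Usage with placeholder transitions (random numbers, not real environment data).
rng = random.Random(0)
hidden_layer = init_layer(STATE_DIM, HIDDEN_UNITS, rng)
output_layer = init_layer(HIDDEN_UNITS, N_ACTIONS, rng)
buffer = ReplayBuffer(capacity=10_000)  # assumed capacity
for step in range(64):
    state = [rng.uniform(-1.0, 1.0) for _ in range(STATE_DIM)]
    next_state = [rng.uniform(-1.0, 1.0) for _ in range(STATE_DIM)]
    buffer.add(state, rng.randrange(N_ACTIONS), rng.uniform(-1.0, 1.0),
               next_state, step % 16 == 15)

batch = buffer.sample(32, rng)  # assumed batch size
targets = [
    td_target(reward, q_values(next_state, hidden_layer, output_layer), done, discount=0.99)
    for _, _, reward, next_state, done in batch
]
print(len(batch), len(targets))
```

In a full agent, each target would be compared against the network's current Q-value for the action that was actually taken, and the resulting error would drive the weight update.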
